ML Data Analytics

Backend Service, API

Project Summary

A data aggregation API built for enterprise use. Provides real-time data synchronization capabilities and transforms raw inputs into structured analytics layers.

Enables operational reporting, pattern recognition, and metric tracking across multiple data sources. Supports filtering, segmentation, and export functionality for integration with business intelligence tools.

Architected for high availability with distributed processing, automated scheduling, and data consistency guarantees. Handles backfilling and incremental updates efficiently.

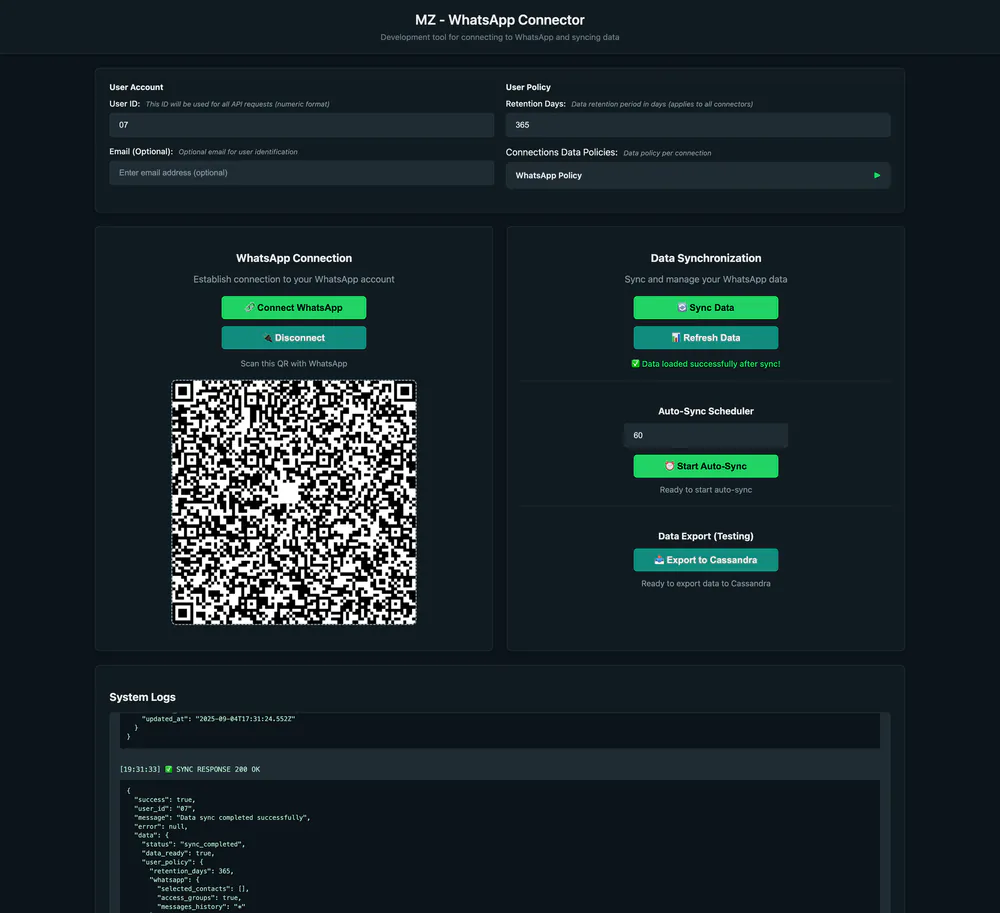

The screenshot shows a developer utility built for one connector in this microservice: an internal tool that simplifies onboarding and system interaction during development.

Case Study

Overview

Built a data aggregation and analytics service that syncs conversation data across channels, normalizes it into an analytics layer, and powers reporting plus chat-style exploration.

Problem

Teams were stuck exporting conversation data manually, cleaning it by hand, and stitching reports across tools. Metrics were inconsistent, insights were delayed, and it was hard to ask questions across all conversations in one place.

Goals

- Sync multi-channel conversation data with <5 minute lag for active connectors.

- Normalize events into a unified schema with >95% field coverage.

- Generate standard reports in under 5 minutes without manual exports.

- Enable chat-style queries over the analytics layer for faster answers.

- Provide a developer-facing utility to validate connectors and sync health.
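One way to make the field-coverage goal above measurable is sketched below. The `NormalizedEvent` struct and its five fields are hypothetical, since the case study does not list the actual schema; the sketch only shows coverage computed as populated fields divided by total fields across a batch.

```go
package main

import "fmt"

// NormalizedEvent is a hypothetical unified schema (assumption: the real
// field set is not described in the case study).
type NormalizedEvent struct {
	ConversationID string
	Channel        string
	SenderID       string
	Text           string
	SentAtUnix     int64
}

// filledFields reports how many schema fields are populated in e,
// and how many fields the schema has in total.
func filledFields(e NormalizedEvent) (filled, total int) {
	total = 5
	if e.ConversationID != "" {
		filled++
	}
	if e.Channel != "" {
		filled++
	}
	if e.SenderID != "" {
		filled++
	}
	if e.Text != "" {
		filled++
	}
	if e.SentAtUnix != 0 {
		filled++
	}
	return filled, total
}

// FieldCoverage returns the fraction of populated fields across all events.
// An empty batch yields 0.
func FieldCoverage(events []NormalizedEvent) float64 {
	var filled, total int
	for _, e := range events {
		f, t := filledFields(e)
		filled += f
		total += t
	}
	if total == 0 {
		return 0
	}
	return float64(filled) / float64(total)
}

func main() {
	batch := []NormalizedEvent{
		{ConversationID: "c1", Channel: "email", SenderID: "u1", Text: "hi", SentAtUnix: 1700000000},
		{ConversationID: "c2", Channel: "chat", Text: "hello"}, // sender and timestamp missing
	}
	fmt.Printf("coverage: %.2f\n", FieldCoverage(batch))
}
```

A check like this could run per connector, so that a source falling below the 95% target is flagged before its data reaches reports.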

Approach

- Chose an event-driven pipeline (Kafka) to decouple ingestion from analytics, trading some operational complexity for resilient backfills.

- Used Go for high-throughput connectors and Cassandra for scalable time-series storage, while keeping PostgreSQL for metadata and policy state.

- Added a lightweight internal utility (Vue.js) as the “eye” into the service for connector QA and debugging, instead of building a full admin product.

- Prioritized observability (Grafana, Loki, Tempo, OpenTelemetry) to trace data lineage and sync health end-to-end.
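The decoupling described in the first bullet can be illustrated with a minimal sketch. It does not use Kafka: a buffered Go channel stands in for a topic so the example stays self-contained, and the names `RawEvent`, `Ingest`, `Consume`, and `Run` are hypothetical. The point is only that connectors publish without knowing who consumes, and the analytics side reads at its own pace.

```go
package main

import (
	"fmt"
	"sync"
)

// RawEvent is a hypothetical message a connector publishes.
type RawEvent struct {
	Connector string
	Payload   string
}

// Ingest publishes each payload to the topic. It has no knowledge of
// the consumer, which is the decoupling an event-driven pipeline provides.
func Ingest(connector string, payloads []string, topic chan<- RawEvent) {
	for _, p := range payloads {
		topic <- RawEvent{Connector: connector, Payload: p}
	}
}

// Consume drains the topic until it is closed and counts events per connector.
func Consume(topic <-chan RawEvent) map[string]int {
	counts := make(map[string]int)
	for ev := range topic {
		counts[ev.Connector]++
	}
	return counts
}

// Run starts one producer goroutine per source, closes the topic once all
// producers finish, and returns the consumer's per-connector counts.
func Run(sources map[string][]string) map[string]int {
	topic := make(chan RawEvent, 16) // stand-in for a Kafka topic (assumption)
	var wg sync.WaitGroup
	for name, payloads := range sources {
		wg.Add(1)
		go func(name string, payloads []string) {
			defer wg.Done()
			Ingest(name, payloads, topic)
		}(name, payloads)
	}
	go func() {
		wg.Wait()
		close(topic)
	}()
	return Consume(topic)
}

func main() {
	counts := Run(map[string][]string{
		"email": {"a", "b"},
		"chat":  {"c"},
	})
	fmt.Println(counts["email"], counts["chat"])
}
```

Unlike this in-memory channel, a durable log retains events after they are consumed, which is what makes replaying history for backfills practical.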

Solution

A distributed analytics service with connector sync, normalization, retention policies, report generation, and a chat-style query layer, plus an internal dev utility to trigger syncs, inspect logs, and preview ingested data.

Outcomes

- Reduced report prep time from ~1-2 days of manual exports to ~1-2 hours.

- Delivered consistent metrics across channels with unified definitions and schema.

- Cut connector QA time by >70% using the internal utility for quick validation.

Key Metrics

Timeline

Challenges

- Keeping sync consistent across retries, backfills, and partial failures.

- Aligning metrics across sources without losing source-specific context.

- Balancing internal tooling speed with production-grade observability.
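A common way to approach the first challenge is to make writes idempotent, so that a retried batch or an overlapping backfill cannot double-count events. The sketch below is an assumption about how that could look, not a description of the service's actual storage layer: an in-memory map stands in for the database, and the `Event` fields and the `Source/SourceID` key are hypothetical.

```go
package main

import "fmt"

// Event is a hypothetical synced record. Source plus SourceID identify it
// uniquely; Version increases when the source edits the record.
type Event struct {
	Source   string
	SourceID string
	Version  int
	Body     string
}

// Store is an in-memory stand-in for the analytics table (assumption).
type Store struct {
	rows map[string]Event
}

// NewStore returns an empty store.
func NewStore() *Store {
	return &Store{rows: make(map[string]Event)}
}

// Upsert writes e only if it is new or newer than the stored version.
// It reports whether the stored state changed, so replays are no-ops.
func (s *Store) Upsert(e Event) bool {
	key := e.Source + "/" + e.SourceID
	if cur, ok := s.rows[key]; ok && cur.Version >= e.Version {
		return false
	}
	s.rows[key] = e
	return true
}

// Len returns the number of distinct events stored.
func (s *Store) Len() int {
	return len(s.rows)
}

func main() {
	s := NewStore()
	batch := []Event{
		{Source: "email", SourceID: "m1", Version: 1, Body: "hello"},
		{Source: "email", SourceID: "m2", Version: 1, Body: "hi"},
	}
	for _, e := range batch {
		s.Upsert(e)
	}
	for _, e := range batch { // simulated retry of the same batch
		s.Upsert(e)
	}
	// A later backfill delivers an edited version of m1.
	s.Upsert(Event{Source: "email", SourceID: "m1", Version: 2, Body: "hello (edited)"})
	fmt.Println(s.Len())
}
```

With this shape, a partial failure can be handled by re-running the whole batch, since already-applied events are skipped rather than duplicated.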

Project Information

Technologies Used

Private stack – contact for info