Free AI Content Detector

Paste any text and get an instant estimate of the probability that it was written by AI rather than a human. The tool uses a RoBERTa-based classifier trained by OpenAI and runs entirely in your browser via Transformers.js. No signup, no server-side processing, no API calls. Your text stays on your device.

How Does AI Content Detection Work?

AI content detectors analyze patterns in text that distinguish human writing from machine-generated output. This tool uses a RoBERTa-based classifier that OpenAI fine-tuned specifically to detect AI-generated text. The classifier learns its signals from labeled examples rather than from hand-written rules. These signals tend to correlate with statistical properties such as token probability distributions, sentence structure uniformity, and vocabulary predictability. The output is a confidence score for each class (AI-generated or human-written).
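To illustrate how a two-class classifier's raw outputs become a confidence score, here is a minimal sketch of the softmax step. The logit values and the class order (AI-generated first, human-written second) are assumptions made for illustration. They are not taken from this tool's model.

```typescript
// Convert raw classifier logits into probabilities that sum to 1.
function softmax(logits: number[]): number[] {
  // Subtract the maximum logit first for numerical stability.
  const max = Math.max(...logits);
  const exps = logits.map((x) => Math.exp(x - max));
  const total = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / total);
}

// Hypothetical logits in the assumed order [AI-generated, human-written].
const [pAi, pHuman] = softmax([2.0, -1.0]);
console.log(`AI: ${(pAi * 100).toFixed(1)}%, human: ${(pHuman * 100).toFixed(1)}%`);
```

Because the two probabilities always sum to 1, a high AI score is the same statement as a low human score. It is not a separate piece of evidence.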

Cloud-based detectors such as GPTZero, Turnitin AI Detection, and Originality.ai require you to upload your text to their servers. This tool instead runs the entire model locally in your browser via Transformers.js. Your text never leaves your device, so you can check sensitive academic papers, confidential documents, or private content without sending them to a third party.

The model achieves approximately 95% accuracy on GPT-2 generated text. Accuracy may be lower on text from newer models such as GPT-4, Claude, or Gemini, because detection gets harder as language models improve. For best results, provide at least 50 words, since longer samples produce more reliable predictions. No AI detector is perfect, so treat the result as one signal among many.
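The 50-word recommendation can be checked before running detection. The following is a minimal sketch, assuming words are separated by whitespace. The names `MIN_WORDS`, `countWords`, and `hasEnoughText` are hypothetical and not part of this tool.

```typescript
// Minimum length taken from the recommendation above.
const MIN_WORDS = 50;

// Count whitespace-separated words; empty or blank input counts as zero.
function countWords(text: string): number {
  const trimmed = text.trim();
  return trimmed === "" ? 0 : trimmed.split(/\s+/).length;
}

// True when the sample is long enough for a more reliable prediction.
function hasEnoughText(text: string, minWords: number = MIN_WORDS): boolean {
  return countWords(text) >= minWords;
}

const sample = "This is a short sample.";
if (!hasEnoughText(sample)) {
  console.log(`Only ${countWords(sample)} words; add more text for a more reliable result.`);
}
```

A check like this only warns about short input. It does not make the prediction itself more accurate.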

Need expert help with AI?

Looking for a specialist to help integrate, optimize, or consult on AI systems? Book a one-on-one technical consultation with an experienced AI consultant to get tailored advice.

Get a Personal AI Assistant

Hire an AI assistant for scheduling, reminders, inbox triage, daily coordination and more. No-code setup, fully customizable, and ready to help you save time and stay organized. Works 24/7 without breaks or burnout.

More Free Tools

More than 20 free AI tools.

Q&A SESSION

Got a quick technical question?

Skip the back-and-forth. Get a direct answer from an experienced engineer.

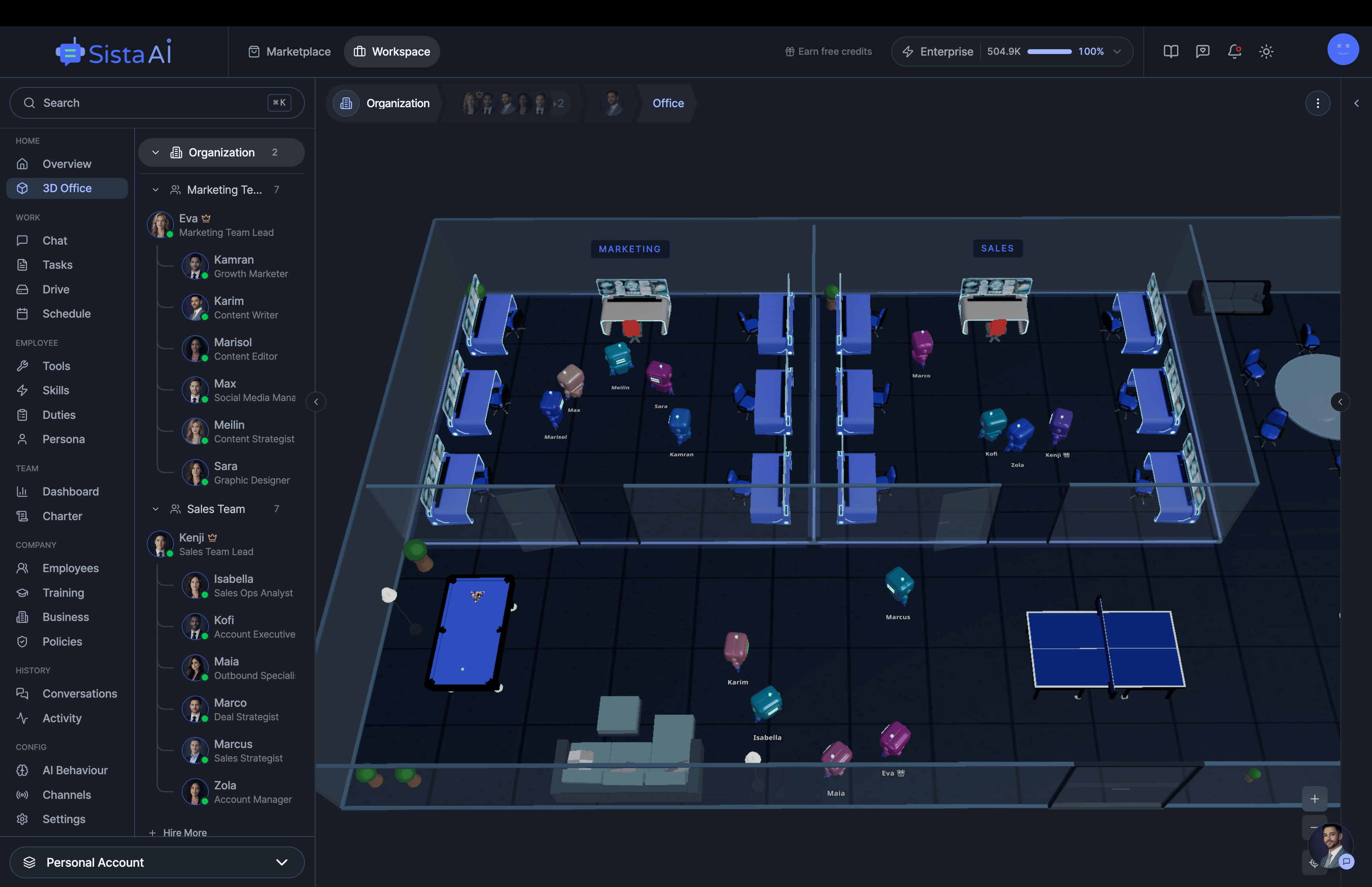

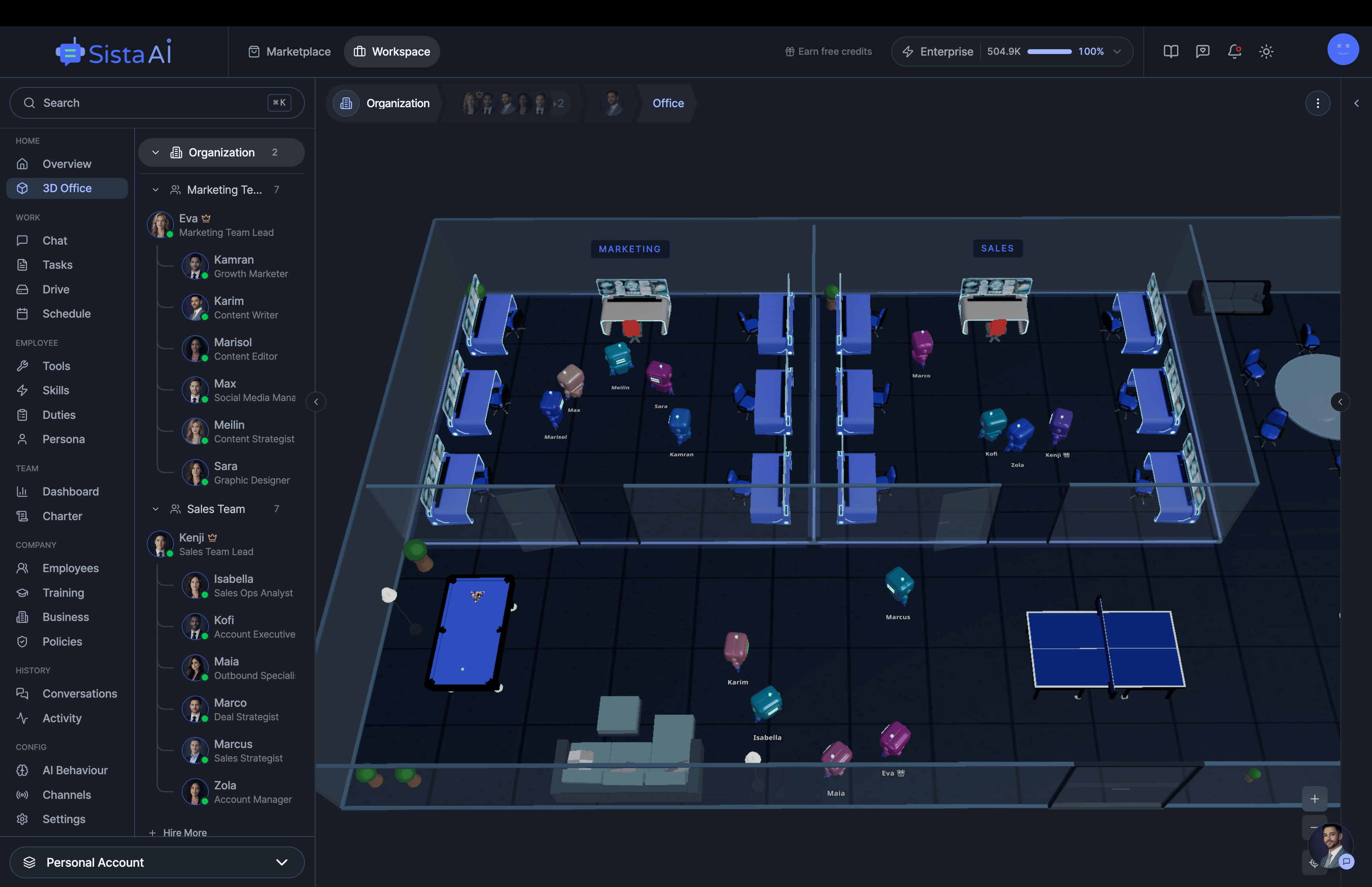

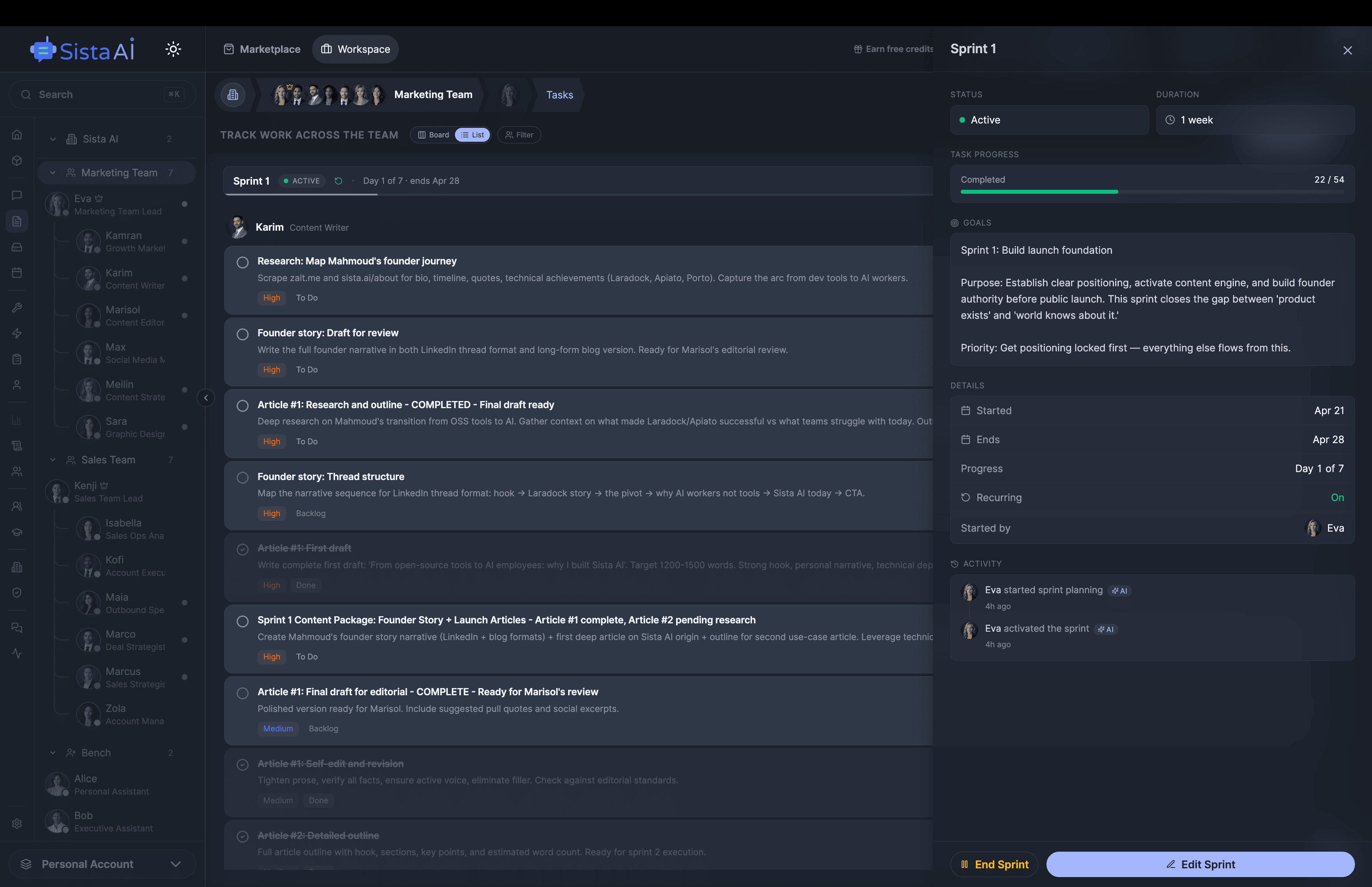

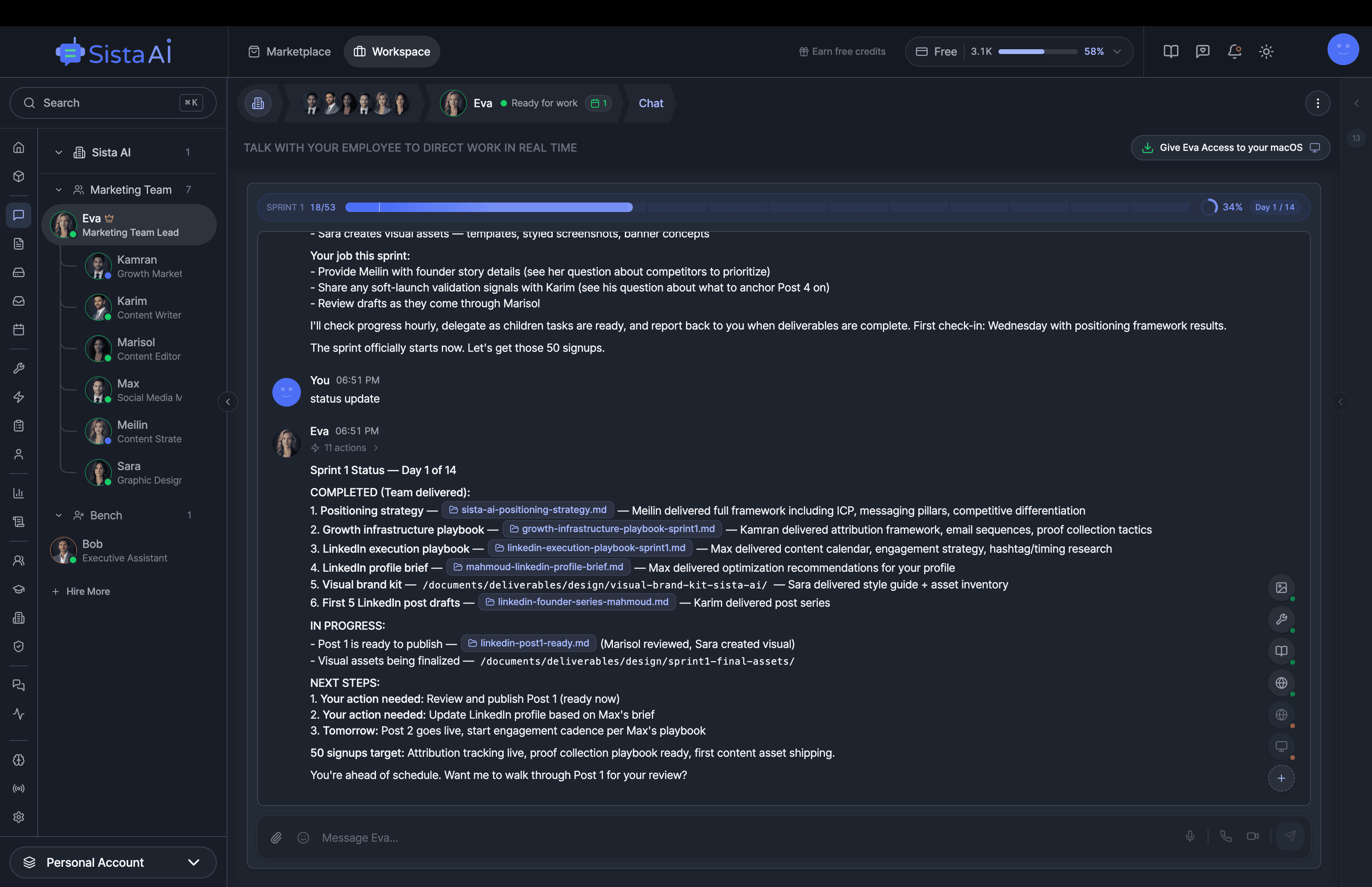

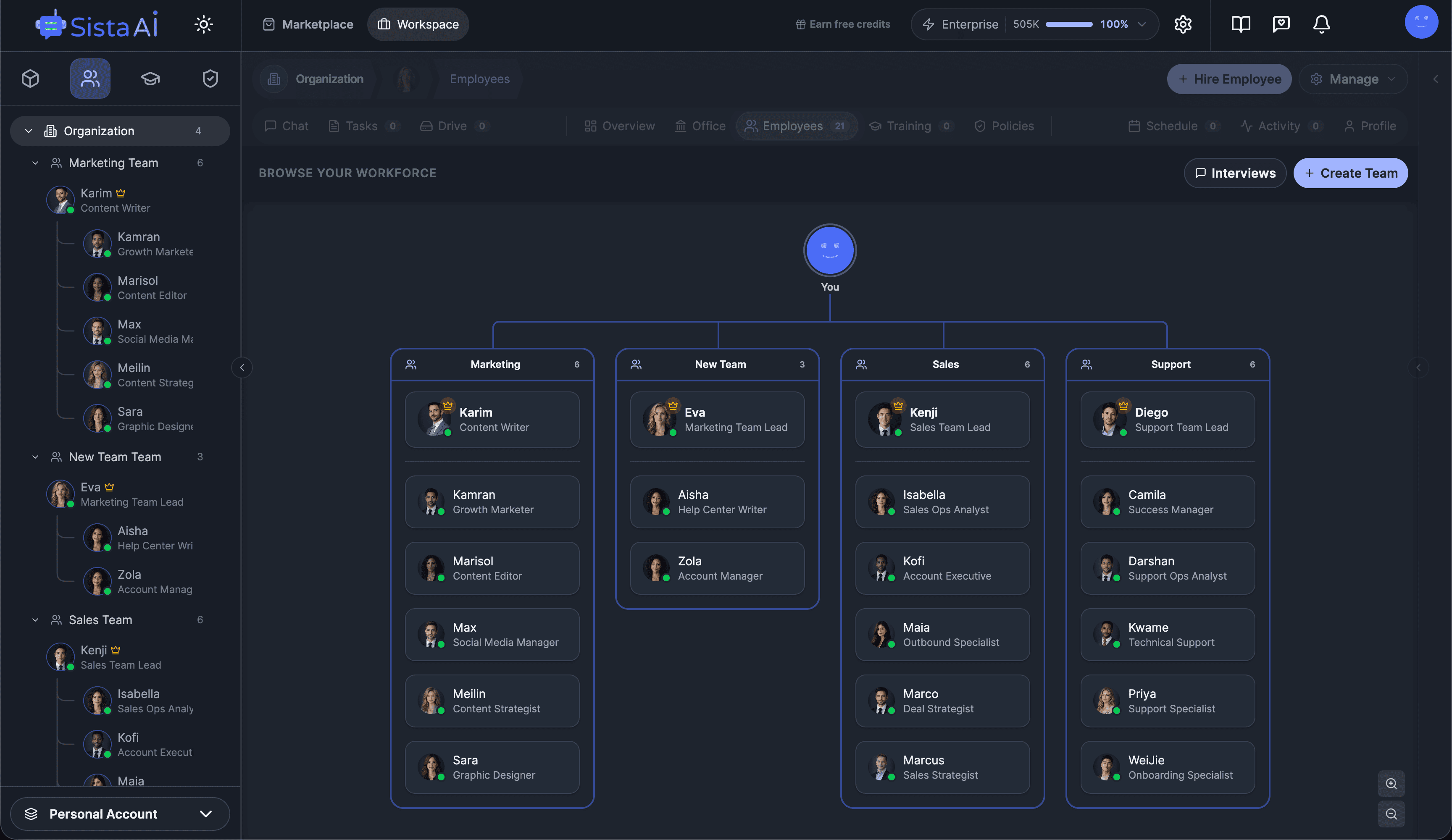

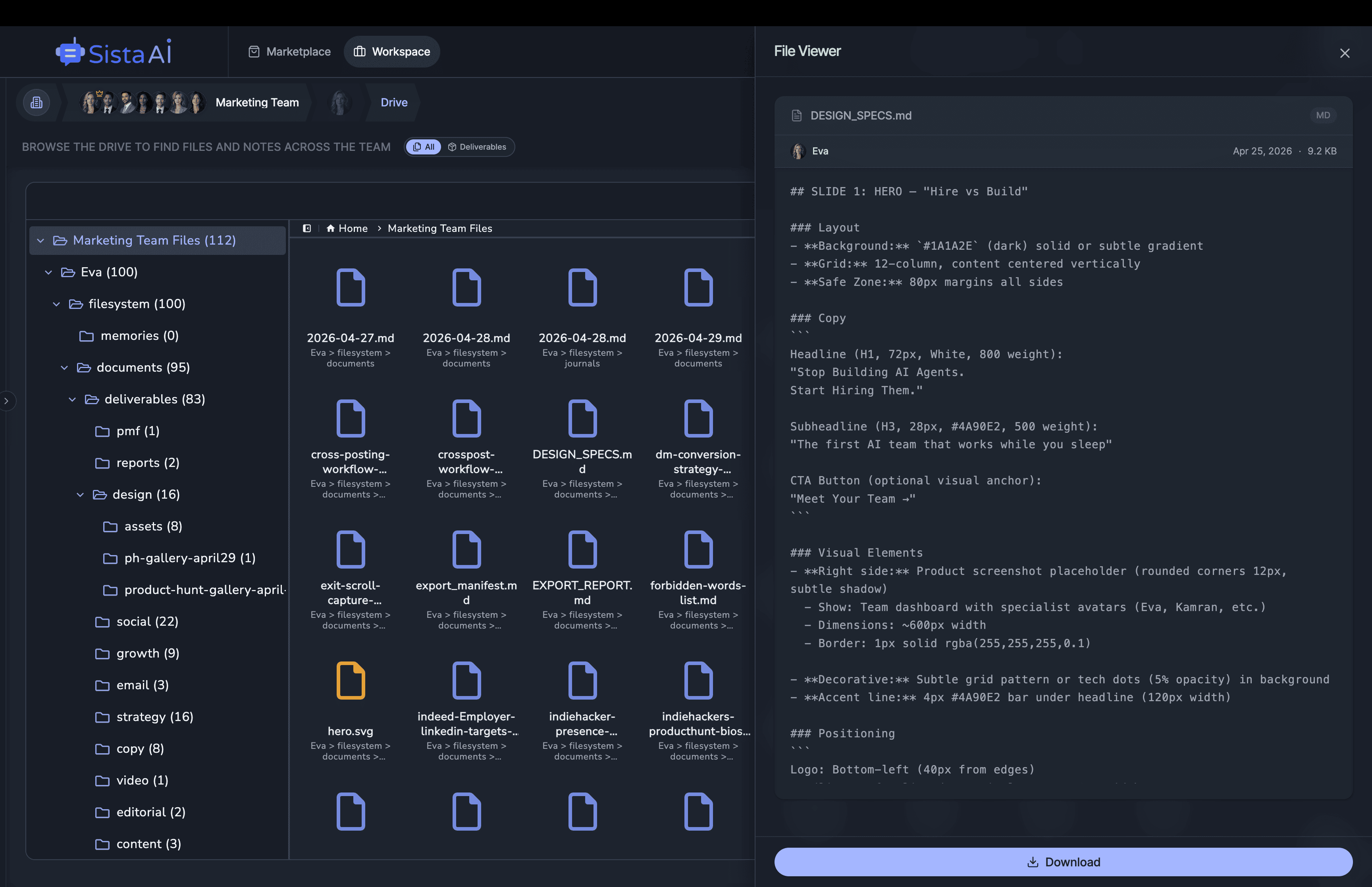

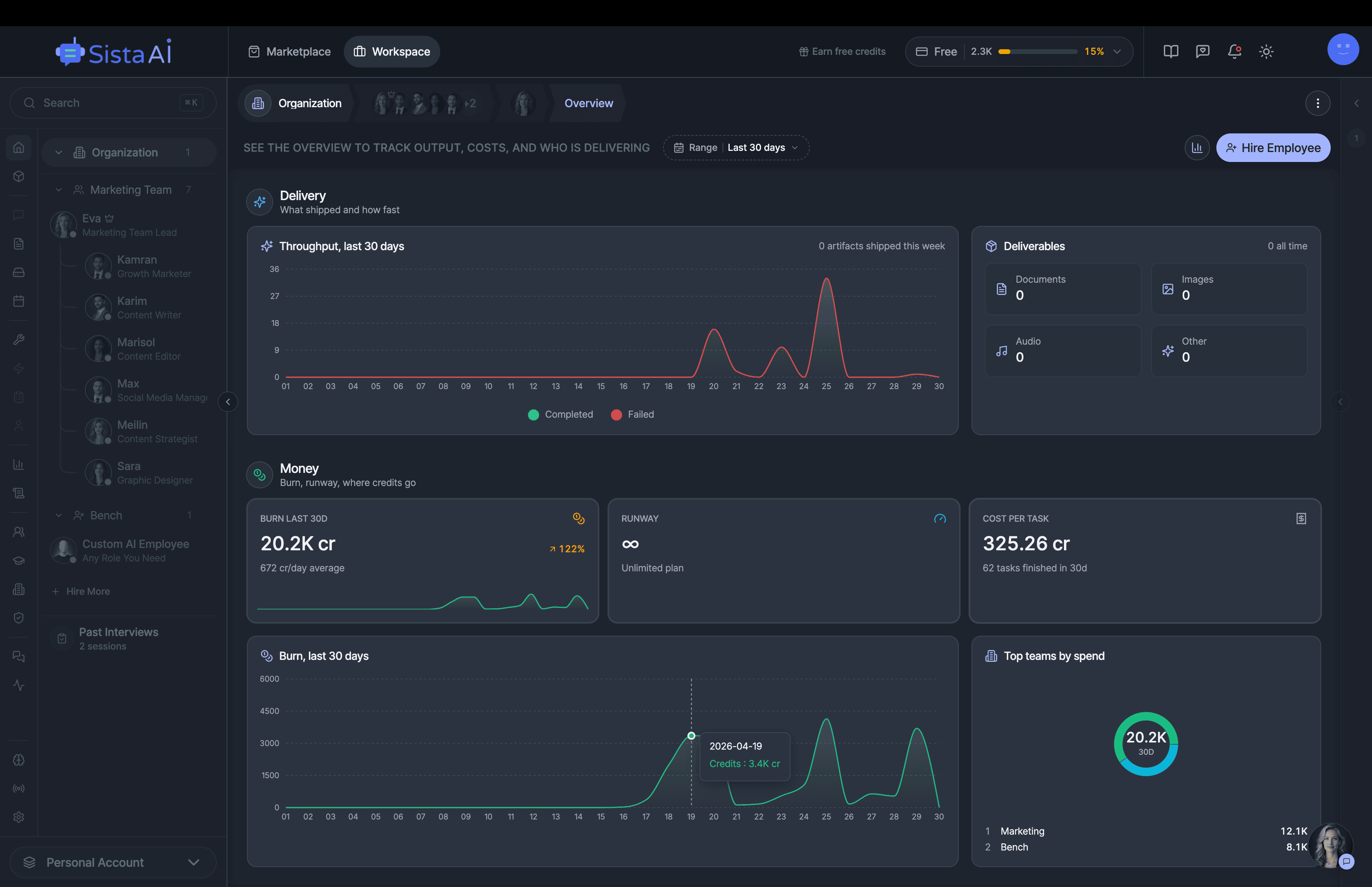

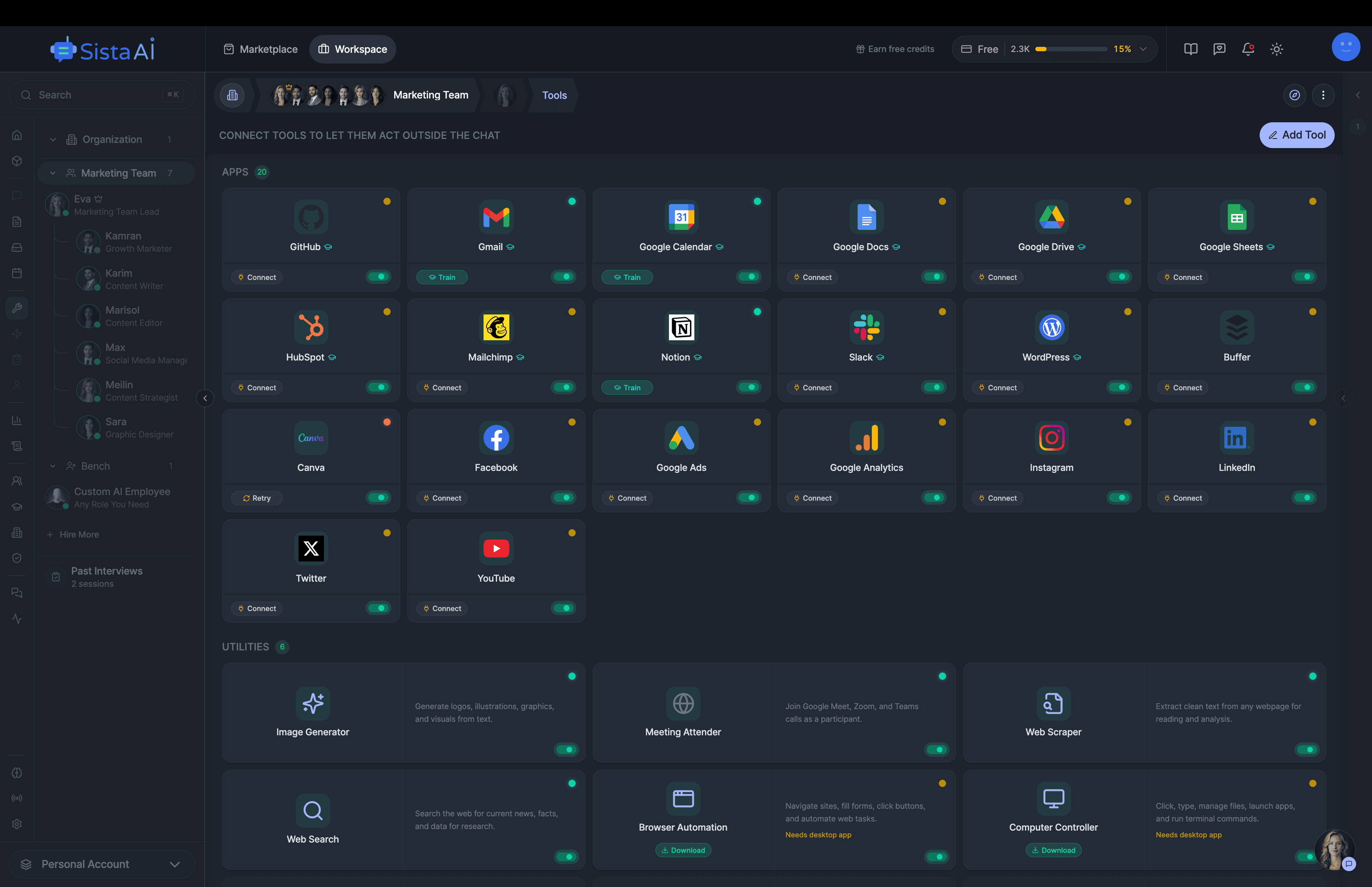

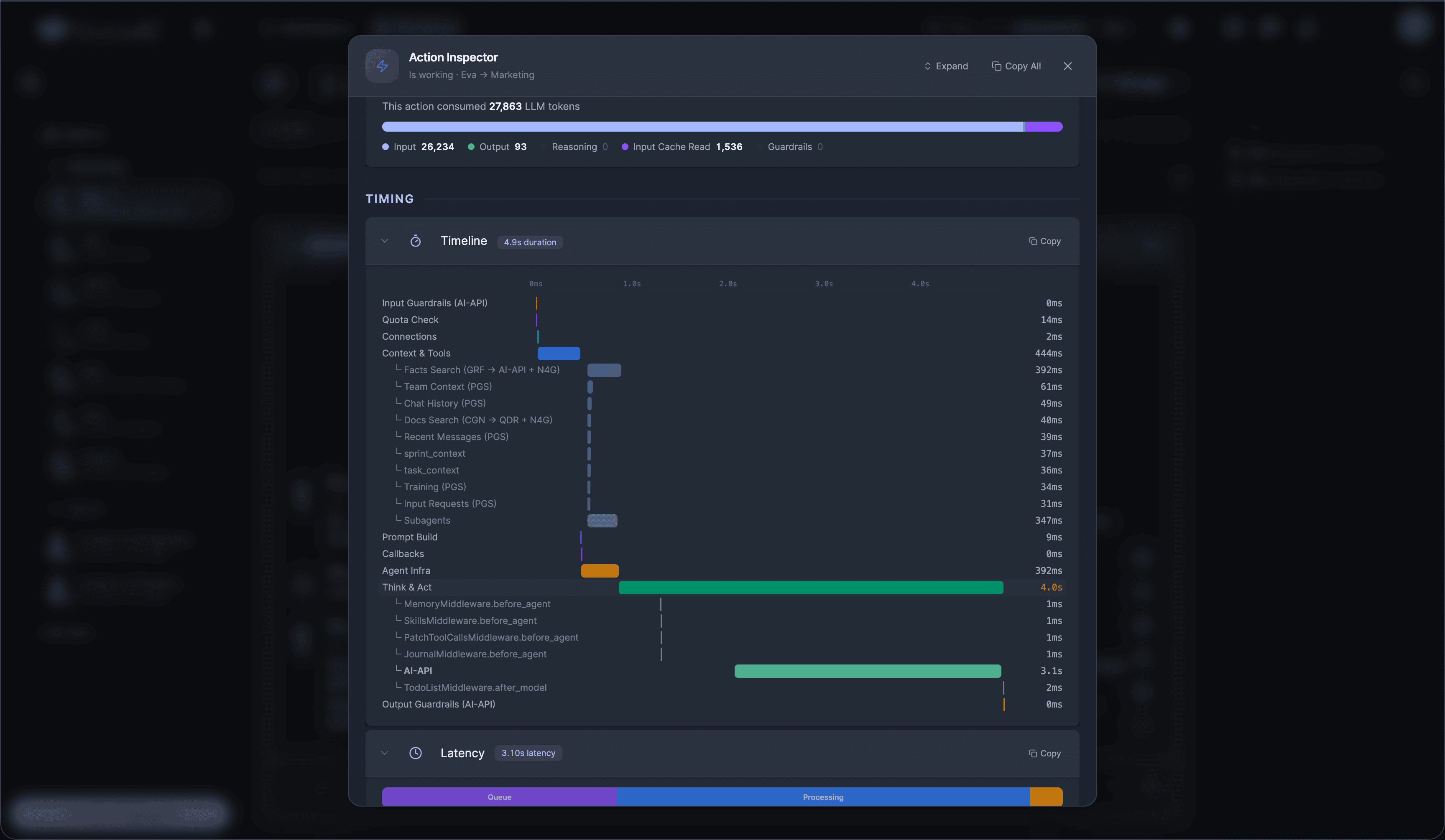

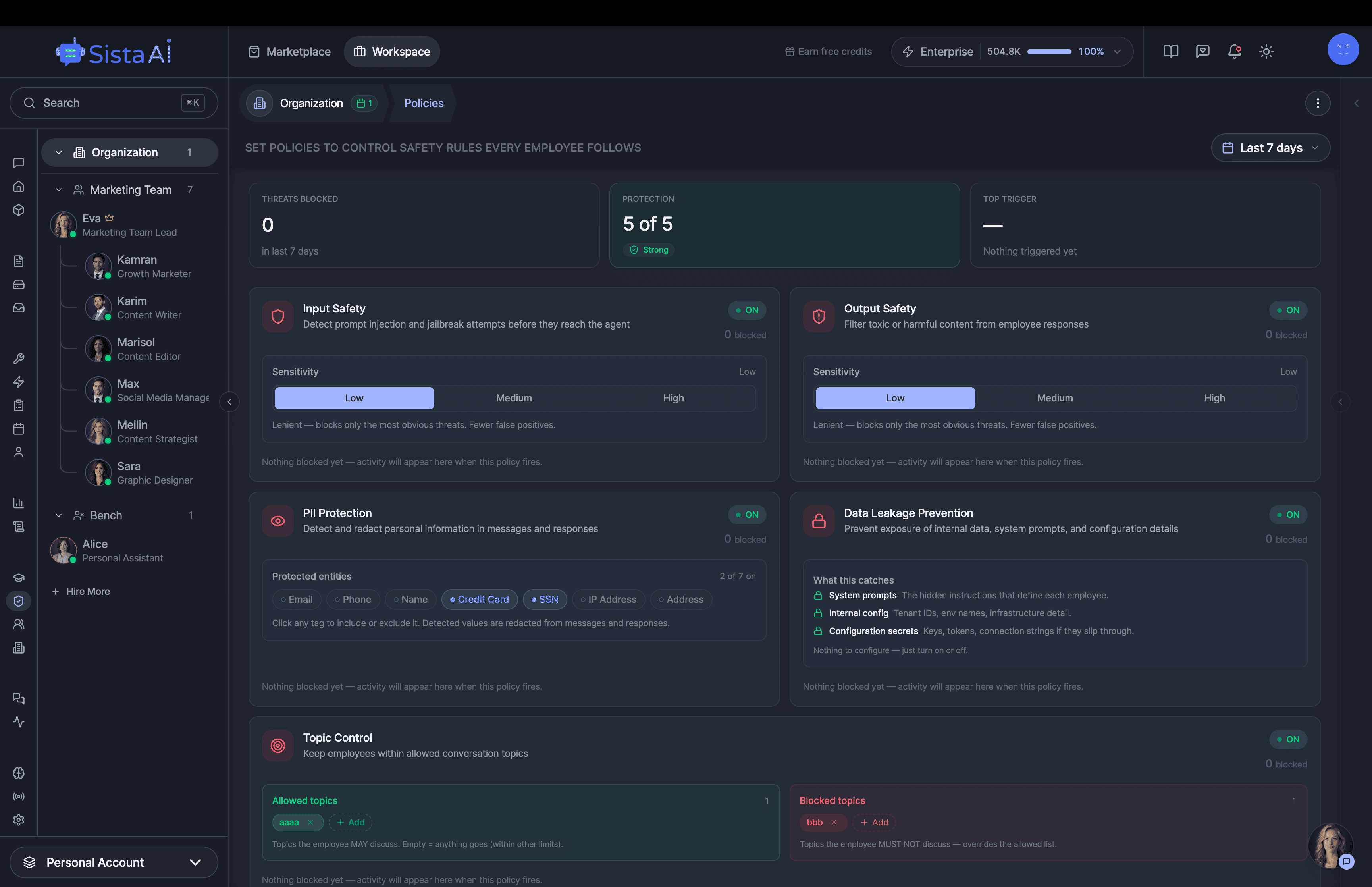

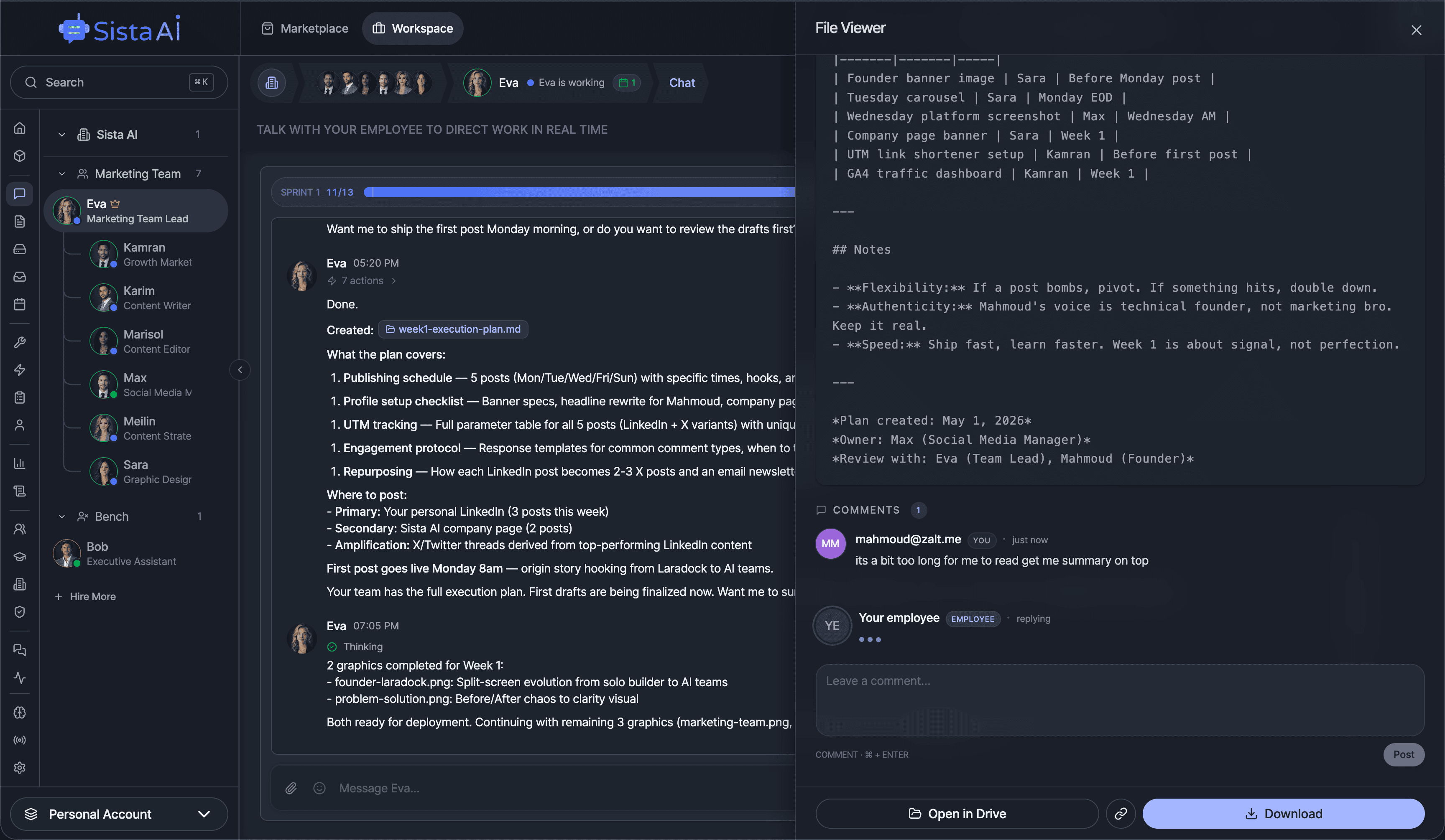

Hire Teams of AI Employees

Trained teams of AI employees that work in sprints and follow OKRs to deliver real results. While you focus on strategy.

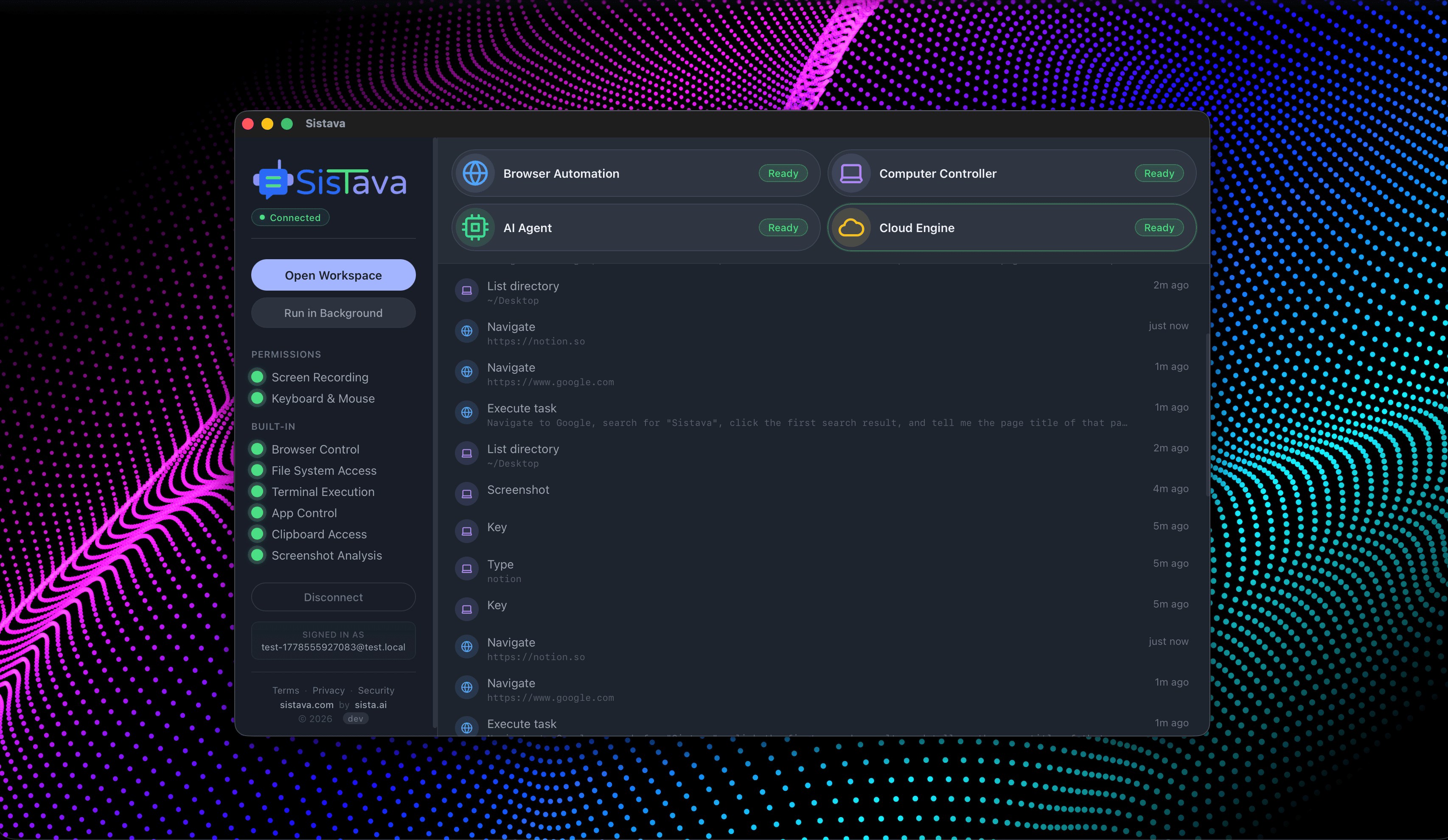

- Control your computer with natural language

- Automate any browser workflow end-to-end

- Attend and summarize your meetings

- Run teams of AI workers that collaborate in sync

- 3D office view to visualize and manage your AI workforce

How It Works

1. Paste or type the text you want to analyze.

2. Click Detect. The AI model classifies the text locally in your browser.

3. See the probability score of AI-generated vs human-written content.
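The last step, turning classifier output into the displayed score, might look like the sketch below. It assumes the classifier returns an array of `{ label, score }` objects, which is the usual shape of text-classification results, and that the AI-generated class is labeled `"Fake"`. Both the label name and the helper `toVerdict` are assumptions, not this tool's actual code.

```typescript
// One entry of classifier output: a class label and its probability.
interface LabelScore {
  label: string;
  score: number;
}

// Percentages shown to the user, rounded to one decimal place.
interface Verdict {
  aiPercent: number;
  humanPercent: number;
}

// Map two-class classifier output to AI vs human percentages.
// aiLabel is an assumed label name; check the model's own label mapping.
function toVerdict(outputs: LabelScore[], aiLabel: string = "Fake"): Verdict {
  const ai = outputs.find((o) => o.label === aiLabel);
  if (!ai) {
    throw new Error(`Label "${aiLabel}" not found in classifier output`);
  }
  const aiPercent = Math.round(ai.score * 1000) / 10;
  // With only two classes, the human share is the remainder.
  const humanPercent = Math.round((100 - aiPercent) * 10) / 10;
  return { aiPercent, humanPercent };
}

// Hypothetical output, not a real model result.
const verdict = toVerdict([
  { label: "Fake", score: 0.873 },
  { label: "Real", score: 0.127 },
]);
console.log(`AI-generated: ${verdict.aiPercent}%, human-written: ${verdict.humanPercent}%`);
```

Throwing on a missing label, rather than defaulting to zero, avoids silently showing a misleading score if the model's labels differ from the assumed ones.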

Limitations

- Detection accuracy varies with text length and style

- Works best with English text of 50+ words

- No AI detector is 100% accurate

- Model download is ~350MB on first use

- Short texts produce less reliable results